The modern web is a vast, ever-changing ecosystem of data. From pricing intelligence and market research to SEO monitoring and brand protection, organizations depend on efficient data extraction to stay competitive. At the center of this data revolution lies one essential component: the web scraping proxy network platform. Without it, even the most sophisticated scraping tools would be quickly blocked, rate-limited, or banned.

TLDR: A web scraping proxy network platform is a distributed system that routes scraping traffic through diverse IP addresses to avoid detection and blocking. Its architecture typically includes proxy pools, rotation engines, request routing layers, monitoring dashboards, and security controls. Key features such as automatic IP rotation, geo-targeting, session management, and real-time analytics make these platforms powerful and scalable. Understanding how these components work together is essential for building reliable and ethical scraping operations.

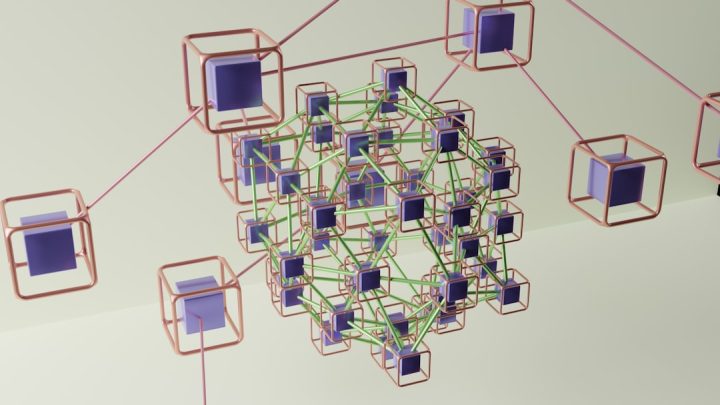

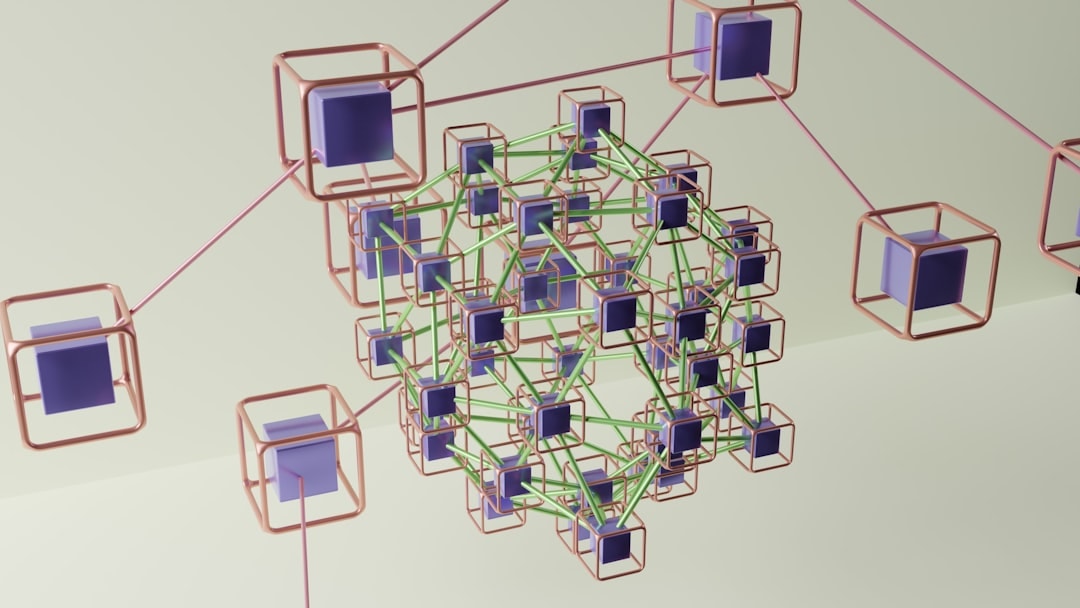

A proxy network platform acts as an intermediary layer between your scraping application and target websites. Instead of sending requests directly from a single IP address, traffic is routed through a pool of geographically distributed IPs. This approach mimics natural user behavior and significantly reduces the likelihood of detection.

Understanding the Core Architecture

At a high level, a web scraping proxy network platform is built on distributed systems principles. Its architecture balances scalability, redundancy, performance, and anonymity. Below are the core architectural components:

1. Proxy Pool Layer

The proxy pool is the foundation of the platform. It consists of thousands or even millions of IP addresses sourced from different networks and geographies. These IPs generally fall into several categories:

- Datacenter Proxies: Fast and cost-effective but easier to detect.

- Residential Proxies: Real IP addresses assigned to homeowners; more trusted by websites.

- Mobile Proxies: IPs assigned by mobile carriers; highly resilient against blocking.

- ISP Proxies: Static residential IPs hosted in data centers.

A healthy proxy pool continuously refreshes its IP inventory to remove banned or low-quality nodes while adding new ones.

2. Traffic Routing Engine

The routing engine decides which proxy is assigned to each outgoing request. It evaluates factors such as:

- Target domain sensitivity

- Geographic requirements

- Historical success rates

- Latency and uptime metrics

This intelligent distribution ensures optimal performance while minimizing detection risk.

3. IP Rotation System

One of the most critical components, the IP rotation mechanism, automatically changes IP addresses at predefined intervals or per request. Rotation strategies may include:

- Per-request rotation

- Sticky sessions (maintaining the same IP during a session)

- Adaptive rotation based on response feedback

This flexibility allows scrapers to adapt to various website defenses.

4. Control Dashboard and API Layer

Users interact with the platform through a web dashboard or API. These interfaces provide:

- Usage analytics

- IP geolocation controls

- Session configuration

- Authentication management

The API layer is particularly important for automation-heavy environments where large-scale scraping workflows are orchestrated programmatically.

5. Monitoring and Health Checks

Continuous monitoring ensures each IP remains functional and responsive. Health metrics include:

- Response time

- Block rate

- Error codes

- Bandwidth consumption

This data feeds into optimization algorithms that dynamically reroute traffic away from problematic nodes.

Key Features That Define a High-Quality Platform

Not all proxy network platforms offer the same capabilities. Advanced systems distinguish themselves through a set of critical features designed for reliability and stealth.

Automatic Geo-Targeting

Many scraping operations require access to location-specific content. Whether collecting localized pricing data or verifying ads in different countries, geo-targeting allows users to:

- Select country-level IPs

- Drill down to state or city targeting

- Filter by ASN or carrier

This feature is especially useful in ad verification and e-commerce intelligence campaigns.

Session Persistence

Certain workflows such as account management or cart simulations require multiple requests from the same IP address. Session persistence enables:

- Stable login sessions

- Multi-step form interactions

- Checkout simulations

Without session persistence, websites may flag inconsistent IP behavior.

Captcha and Block Handling

Some advanced platforms integrate automatic retry systems or CAPTCHA-solving integrations. When a block occurs, the system can:

- Switch IP addresses instantly

- Trigger headless browser fallback

- Log the incident for analysis

This automation reduces downtime and human intervention.

Scalability and Load Balancing

Enterprise scraping campaigns can involve millions of requests per day. To maintain performance, platforms incorporate:

- Load-balanced proxy gateways

- Distributed server clusters

- Failover routing mechanisms

These architectural safeguards ensure stable throughput, even during peak loads.

Security and Authentication

Security is a two-way street. Platforms protect both users and proxy owners through:

- IP whitelisting

- Username and password authentication

- Encrypted connections (HTTPS/SOCKS5)

- Usage limits and abuse detection

Secure authentication prevents unauthorized traffic and preserves proxy integrity.

Types of Proxy Networks: Comparison Chart

Different use cases call for different proxy types. The table below compares the most common categories:

| Proxy Type | Speed | Detection Risk | Best For | Cost Level |

|---|---|---|---|---|

| Datacenter | Very High | High | High-volume scraping, low-security targets | Low |

| Residential | Moderate | Low | Ecommerce, search engines, social media | Medium |

| Mobile | Variable | Very Low | Account management, strict platforms | High |

| ISP | High | Low | Long sessions and stable identity needs | Medium to High |

The Role of Automation and AI

Modern proxy network platforms increasingly integrate machine learning and predictive analytics. AI-driven traffic optimization can:

- Predict which IPs are likely to get blocked

- Automatically adjust rotation intervals

- Identify abnormal response patterns

By analyzing billions of requests, these systems continuously refine routing decisions to maintain optimal success rates.

Ethical and Legal Considerations

While proxy platforms are powerful, they must be used responsibly. Organizations should:

- Respect website terms of service

- Avoid scraping personal or sensitive data without permission

- Comply with data protection regulations such as GDPR

- Implement rate limits to reduce server strain

Ethical scraping practices help maintain trust and reduce legal exposure.

Building vs. Buying a Proxy Network

Some enterprises choose to build internal proxy networks, while others rely on established providers. Building in-house offers:

- Full infrastructure control

- Customized routing algorithms

- Potential long-term cost advantages

However, it also requires:

- Extensive maintenance

- Continuous IP acquisition

- Advanced DevOps expertise

Buying from a vendor provides instant scalability and managed maintenance but at recurring subscription costs. The best choice depends on technical resources and business scale.

Future Trends in Proxy Network Platforms

As websites deploy more advanced anti-bot systems — including behavioral fingerprinting and AI-based detection — proxy platforms must evolve. Emerging innovations include:

- Browser fingerprint management integration

- Headless browser proxy combinations

- Behavioral simulation engines

- Edge computing proxy nodes

The next generation of platforms will likely blur the line between proxy services and full-fledged scraping orchestration systems.

Conclusion

A web scraping proxy network platform is far more than a simple IP masking tool. It is a complex, distributed architecture designed to balance anonymity, scalability, and performance. From proxy pool management and intelligent routing engines to AI-driven optimization and real-time monitoring, each component plays a strategic role.

For businesses dependent on public web data, understanding this architecture is not just technical curiosity — it is a competitive necessity. Choosing the right platform, configuring it effectively, and using it ethically can transform raw internet data into actionable intelligence while maintaining operational resilience in an increasingly guarded web environment.